
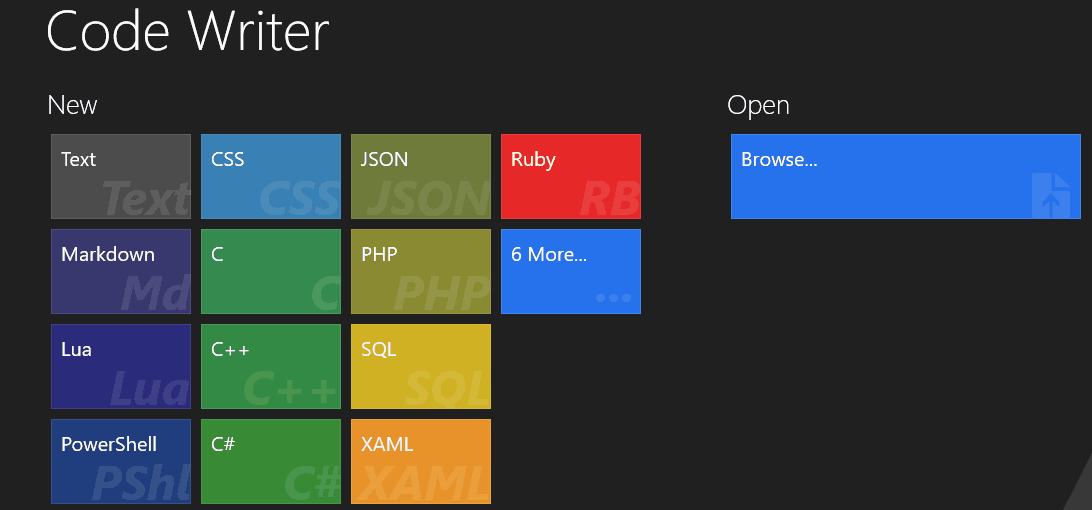

Software controls smartphones, nuclear weapons, and car engines. But there’s a global shortage of programmers. Wouldn’t it be nice if anyone could explain what they want a program to do, and a computer could translate that into lines of code?

A new artificial intelligence (AI) system called AlphaCode is bringing humanity one step closer to that vision, according to a new study. Researchers say the system, from the research lab DeepMind, a subsidiary of Alphabet (Google’s parent company), might one day assist experienced coders, but probably cannot replace them.

“It’s very impressive, the performance they’re able to achieve on some pretty challenging problems,” says Armando Solar-Lezama, head of the computer assisted programming group at the Massachusetts Institute of Technology.

AlphaCode goes beyond the previous standard-bearer in AI code writing: Codex, a system released in 2021 by the nonprofit research lab OpenAI. The lab had already developed GPT-3, a “large language model” that is adept at imitating and interpreting human text after being trained on billions of words from digital books, Wikipedia articles, and other pages of internet text. By fine-tuning GPT-3 on more than 100 gigabytes of code from GitHub, an online software repository, OpenAI came up with Codex. The software can write code when prompted with an everyday description of what it’s supposed to do, such as counting the vowels in a string of text. But it performs poorly when tasked with tricky problems.

AlphaCode’s creators focused on solving those difficult problems. Like the Codex researchers, they started by feeding a large language model many gigabytes of code from GitHub, just to familiarize it with coding syntax and conventions. Then, they trained it to translate problem descriptions into code, using thousands of problems collected from programming competitions.
